This is the fifth and final blog post from the Making an Impact workshops we ran in August. Jan Holden (Head of Service, Norfolk Library and Information Service) and Nick Little (Community Librarian - Evaluation) talked about Norfolk’s evaluation tool: IMPACT. The full set of slides is available, but we’ve summarised the highlights and included answers to some of the questions that were asked during the workshops in this post.

Norfolk wanted to embed evaluation in the library service from the very beginning, at the planning stage. Their chosen model needed to stand up against other evaluation methods, and to recognise that people weren’t conducting evaluations because they didn’t know what was needed and had no common process to follow.

What has changed?

Staff used to use open forms for customers, which didn’t have any links to council outcomes. They were a good potential source of anecdotes, but little more. For example, a comment from the old form below reads "Brilliant class. Very fun. Made an unusual poem. But very enjoyable :)"

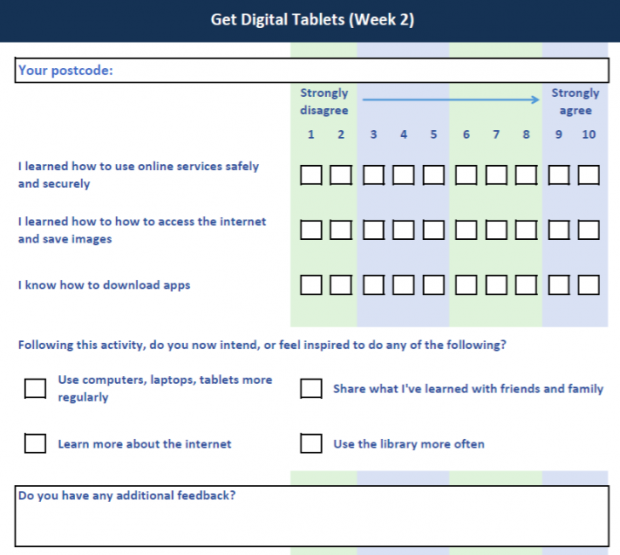

Now the form comes directly from the Impact tool, and everything is structured around outcomes.

So, what is the Impact tool?

Jan described Impact as a simple and quick evaluation framework that can be used to evaluate soft outcomes. The process has been developed into a piece of software that can potentially be used by anyone - local authorities, medium and large organisations, and community groups. It was created jointly by Norfolk Library and Information Service, and shared with Norwich City Council.

The tool uses a series of evaluative statements, which are linked (behind the scenes) directly to outcomes, so the end result assesses the overall value of interventions. These statements are mapped against the priorities of the local authority. The tool is flexible, so additional criteria can be added - for example if a funder wants to measure something specific.
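To make the statement-to-outcome link concrete, here is a minimal sketch of how scores given against statements could roll up into outcome-level results. It is not the Impact tool's actual implementation: the statements, outcome names, `STATEMENT_OUTCOMES` mapping and `outcome_scores` function are all hypothetical, and it assumes responses are recorded on the 1 to 10 agreement scale the form uses.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical mapping from evaluative statements to outcomes.
# The wording and outcome names are illustrative only.
STATEMENT_OUTCOMES = {
    "I feel more confident using a tablet": ["skills", "learning"],
    "I met new people today": ["sense of community"],
}


def outcome_scores(responses):
    """Average the 1-10 agreement scores for each linked outcome.

    `responses` is a list of (statement, score) pairs taken from forms.
    """
    by_outcome = defaultdict(list)
    for statement, score in responses:
        if not 1 <= score <= 10:
            raise ValueError(f"score out of range: {score}")
        # One statement can count towards several outcomes.
        for outcome in STATEMENT_OUTCOMES[statement]:
            by_outcome[outcome].append(score)
    return {outcome: mean(scores) for outcome, scores in by_outcome.items()}


responses = [
    ("I feel more confident using a tablet", 8),
    ("I feel more confident using a tablet", 6),
    ("I met new people today", 9),
]
print(outcome_scores(responses))
```

Because the mapping sits behind the scenes, a funder-specific criterion could be added in this sketch by adding another statement and outcome to the mapping, without changing how forms are filled in.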

Impact has SMART outcomes in order to future-proof it, as council priorities do change frequently. In this way it evaluates outcomes relating to big outcome areas such as skills, learning, leisure, wellbeing and health, and more local ones like people’s sense of community, the environment, equality and diversity, and the places people live. Further development of the tool will create highly specified outcomes that can be linked to beneficiary groups.

Outcomes for courses or events which the library service runs are agreed at the beginning, and these are what the forms then measure against.

Frequency of evaluation will depend on the activity:

- regular/weekly activities are evaluated once every three months or so

- if the activity is a programme which will roll out over a longer period of time, evaluation will be done at the start, to provide a baseline, then at the end to see if outcomes have been achieved

- one-off activities are always evaluated

- annual events can also be evaluated, and reports will cumulate, providing a timeline of how things have changed

The form

Members of staff need to know what outcome(s) are being measured before they can generate a form.

Forms are then handed out to users to fill in at events or courses. Nick mentioned how important it was to introduce the form positively so people are bought into why they’re filling it out. Norfolk focus on the benefit to the user - "we need to know if what we’re doing is working, to stop what doesn’t work and repeat what does, but you need to tell us." Staff then input the data from the forms into the tool.

Users are asked to say how much they agree with a statement (one of the primary outcomes) using a 10-point scale and can also provide an evaluative statement. People asked about the decision to use a 1 to 10 scale - and the main advice was never to use an odd number of points, as people tend to pick a response in the middle. Norfolk use a 1 to 10 scale as they found it to be the one most commonly used by other organisations.

Evaluation outputs

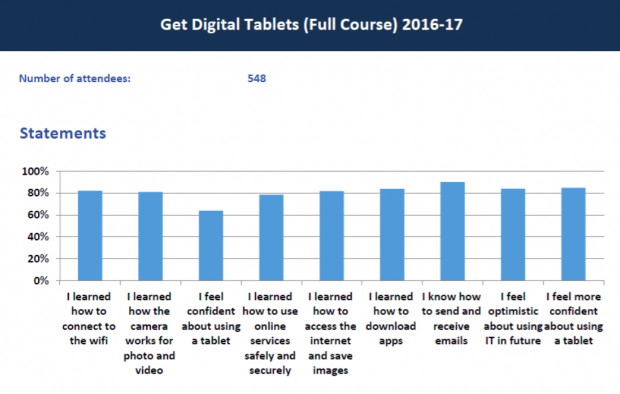

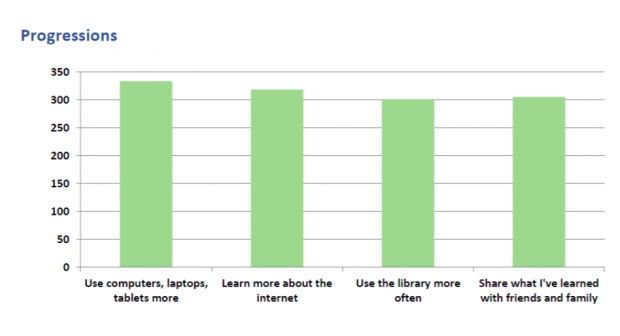

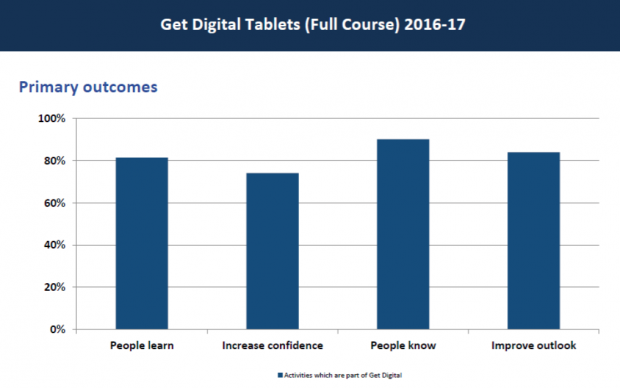

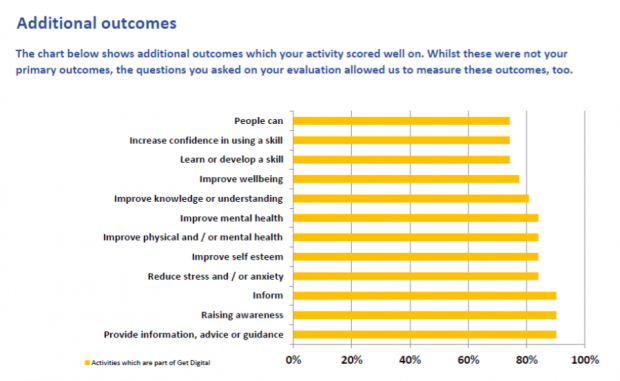

The tool can present the data in a variety of ways, including reports on statements, progressions, primary outcomes and additional outcomes. Comparisons can be made over time, between locations and against outcomes. The images above are some examples of the reports that can be generated, providing easily digestible outputs following events. The examples are all from a Get Digital Tablets course.

Reports are only produced when needed and can be used for funders, in response to requests for information or to prove library impact to colleagues in the council.

The main benefits so far have been:

- time saved

- being able to consistently and systematically evaluate regular activities which the library service does around the county (for example rhyme times)

- a new focus on the outcomes of activities - what they’re trying to achieve - at the design stage of activities

- proving the case for libraries and helping to place them as a central part of the local service strategy

Practical questions

After the presentation, workshop participants were given some practical examples of how the tool worked, which generated lots of questions - both about the tool itself, and how it was used.

People asked about the decision to gather the data using paper forms - they wondered if digital input would be quicker. The Norfolk team responded that in their experience, they get a better response rate on paper. They recognise that staff need to be trained in how to introduce the process, but once people see how it works end to end, they can see the value of it. Setting the statements is only done once, and once they are in the system they can be reused.

The gathered data does have to be input manually, but as people get used to it, they can do it very quickly - and the outputs are generated instantly. “Best way to build confidence in using the system is to make staff use it. The system drives consistency if you know how to use it.” In addition, the data input itself is quite quick. The design of the form helps with this, as the system restricts what can be included in the forms so they’re never too long.

The system already includes a list of all venues in Norfolk that activities can possibly take place in. This took work up front to set up, but means that staff using the tool can pick from a list, thus avoiding different spellings / names for the same place, which would skew results, and make comparisons or aggregating data difficult.
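As an illustration of why picking from a fixed list matters, the sketch below compares counting activity records by free-text venue name with counting against a controlled list. It is only a sketch under stated assumptions: the venue names, the `VENUES` set and the `count_by_venue` function are hypothetical and are not taken from the Impact tool.

```python
from collections import Counter

# Hypothetical controlled list of venues; the names are placeholders.
VENUES = {"Example Town Library", "Example Village Library"}


def count_by_venue(records, venues=VENUES):
    """Count records per venue, rejecting names not on the list."""
    counts = Counter()
    for venue in records:
        if venue not in venues:
            raise ValueError(f"unknown venue: {venue!r}")
        counts[venue] += 1
    return counts


# Free-text entry: one real place ends up split across three spellings.
free_text = [
    "Example Town Library",
    "example town library",
    "Example Town Lib.",
]
print(Counter(free_text))

# Picking from the list: the same three sessions are counted together.
picked = ["Example Town Library"] * 3
print(count_by_venue(picked))
```

With free text, any comparison between locations would first need the spellings reconciled by hand. With a fixed list, that work is done once, up front.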

Future plans

Currently, Impact is being used by the library service, but the intention is to make it available more widely. It will be web-based, and marketed by agreement with Norwich City Council. It will be available to buy, or free to community groups and should be available from next year.

If you would like more information on their plans please email janet.holden@norfolk.gov.uk.

To read all the posts relating to this workshop, search: #LibrariesImpactMeasurement